The impact of the GIL shouldn't be too much of a bottleneck because the tasks are mostly IO-bound, consisting of babysitting requests on the air to wait for the response.

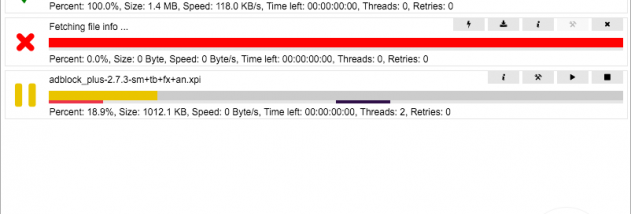

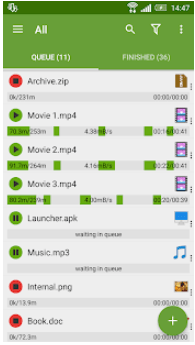

This page offers great examples of all three.įor the purposes of this post, I'll show multithreading. Each has tradeoffs respective to the task at hand and which you choose is likely best determined by benchmarking and profiling. Next, asyncio, multiprocessing or multithreading are available to parallelize the workload. So if you're making several requests to the same host, the underlying TCP connection will be reused, which can result in a significant performance increase (see HTTP persistent connection). It also persists cookies across all requests made from the Session instance, and will use urllib3's connection pooling. The Session object allows you to persist certain parameters across requests. Quoting Advanced Usage: Session Objects from the requests docs: The first step is to use requests.Session() when sending multiple requests to a single host. Turbo download manager save download for future downloading how to#This answer shows how to speed things up. The accepted answer is fantastic but the task is embarrassingly parallel there's no need to retrieve these sub-pages and files one at a time. I hope you can use this code without correction! Turbo download manager save download for future downloading full#(first you search links containing text 'download' in their description, then construct full url - concatenate hostname and path, and finally download file) Now, you should prepare function which will download files for you: def download_file(url):Īnd final magic is to repeat all previous steps preparing links for file downloader: host = ''įile_link = soup.find('a',text=re.compile('.*download.*')) You can do it by searching links containing 'chessgame' word in it. 
I strongly advice to use lxml parser, not common html.parserĪfter that, you should prepare game's links list: pages = soup.findAll('a', href=re.compile('.*chessgame\?.*')) Next, get index page and create BeautifulSoup object: req = requests.get("") I will show you a complete solution and comment this code.įirst, import necessary modules: from bs4 import BeautifulSoup The app supports multiple parallel downloads at the same time too.There is no short answer to your question. You can simply look for files using the built-in browser or enter the download link if you have one. The app includes its own web browser, which functions like any other web browser and makes the whole process a lot simpler. The app is capable of downloading different file types like APK, RAR, ZIP, MP3, DOC, XLS etc, with speed improvements by up to 3 times. Turbo download manager save download for future downloading for android#On devices running Android 5.0 or higher, files can also be saved directly to the SD card.ĭownload Manager for Android or Downloader, as it is popularly known, has over 10 million downloads on the Play Store, so it’s quite popular among users. The interface of the app is fairly simple and easy to use and it lets you download multiple files at the same time, retrieve links from browsers directly, optimize buffer size, pause/resume downloads anytime and more. The makers of the app report increase in speeds by around 5 times, which is not very realistic in our opinion but the app does boost download speeds by increasing the number of connections or threads. Turbo Download Manager is another app that can substantially increase your download speed, whilst also managing multiple downloads.
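The Session and multithreading approach described above could be sketched roughly as follows. This is a minimal illustration, not the original answer's code: the helper names `build_urls`, `make_session_fetch` and `download_all`, the `timeout` and `max_workers` values, and the choice of `ThreadPoolExecutor` are all assumptions. Sharing one `Session` across threads is common in practice, but if in doubt a session per thread is the more cautious option.

```python
from concurrent.futures import ThreadPoolExecutor
from urllib.parse import urljoin


def build_urls(host, paths):
    """Combine a host with relative paths to get full URLs (hypothetical helper)."""
    return [urljoin(host, path) for path in paths]


def make_session_fetch(timeout=30):
    """Return a fetch(url) function backed by one shared requests.Session."""
    import requests  # third-party; imported lazily so the other helpers run without it

    session = requests.Session()  # reuses the TCP connection for the same host

    def fetch(url):
        response = session.get(url, timeout=timeout)
        response.raise_for_status()  # fail loudly on HTTP errors
        return response.content

    return fetch


def download_all(urls, fetch, max_workers=8):
    """Run fetch(url) for every URL on a thread pool; results keep input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(fetch, urls))
```

With a real host and a list of link paths in hand, usage would look like `download_all(build_urls(host, paths), make_session_fetch())`. Because `fetch` is passed in, the thread-pool part can be benchmarked or tested with any callable before touching the network.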
